Kevin Ward, Petrosys Europe

In Petrosys PRO 2020.1 new functionality has been added to improve quality and efficiency when mapping faulted horizons.

Mapping faulted horizons is often tricky and problematic for geoscientists, so I thought it would make sense to write a short summary of how we see these improvements fitting into the wider workflows for today’s geoscientists, and to ask for your thoughts on what else we could be doing to make your life easier.

The challenge comes from how we interpret seismic horizons near to faults.

In the past, resolution was the main issue. What I’ve seen in the industry more recently is that imaging is not as problematic as it used to be. However, interpreting around faults is still an issue due to the prevalence of autotracking and AI/ML workflows.

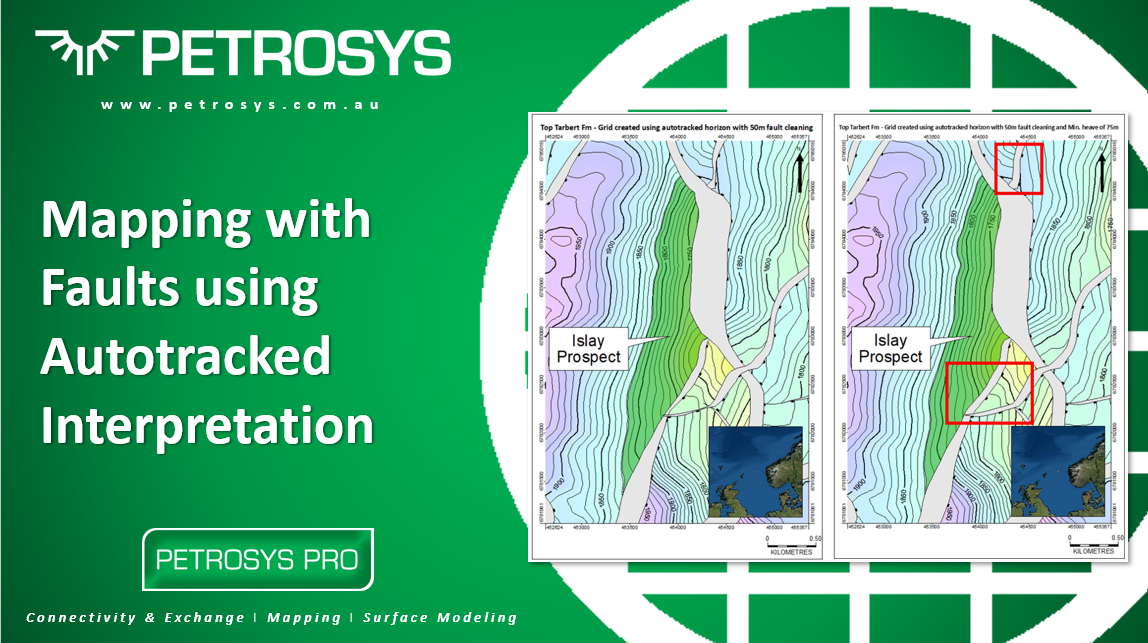

Figure 1: Seismic section with some sketch interpretations. The yellow line is a fault. The blue line is indicative of human interpretation. The orange line is indicative of autotracked interpretation.

In Figure 1, for example, the imaging around this fault is pretty good. A human would know to interpret the horizon up to and ‘snap’ [the blue line] onto the edge of the yellow fault. However, when using (even some of the more sophisticated) autotracking technology, without manual intervention, there is a tendency for the horizon to be continuous and ‘bend’ [the orange line] through the yellow fault zone.

The problem

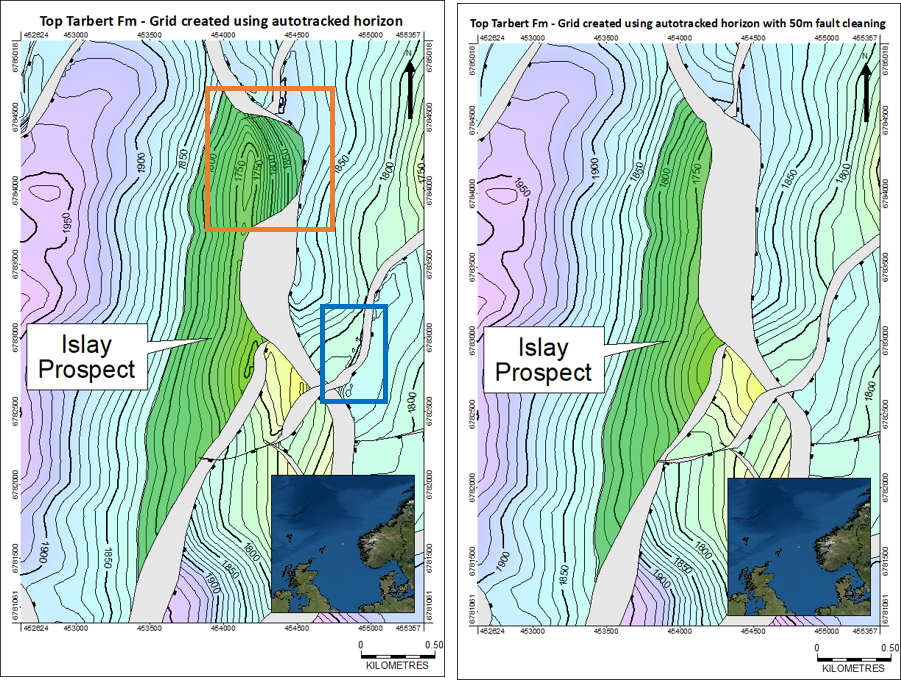

When you use mapping technology to generate faulted surfaces from the autotracked horizons, it creates a twofold problem:

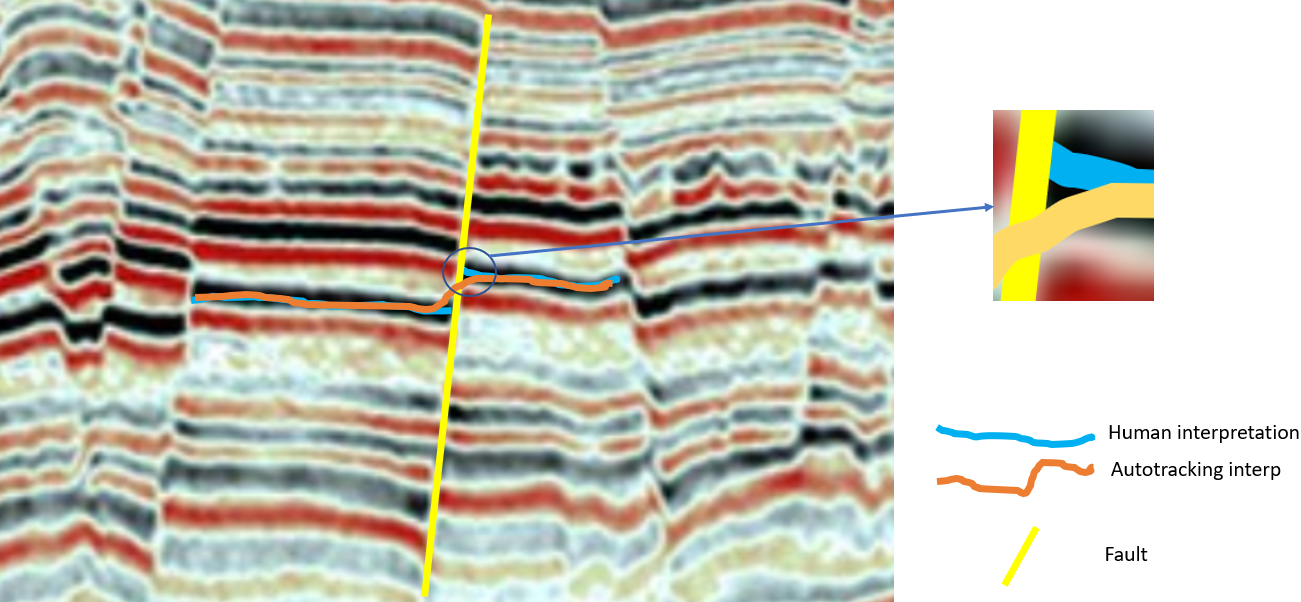

- Firstly, tools which convert fault sticks to fault polygons will struggle because there is no physical separation of the horizon to define the footwall and hangingwall of the fault [see orange box in Figure 2].

- Secondly, the artificial ‘bend’ in the horizon will lead to erroneous data points and hence artefacts near to the fault [see blue box in Figure 1].

It’s difficult to put this into words so, as I usually do, let me try to show this using a map.

Figure 2: Creating a grid using seismic interp and fault sticks as input. Left: The ‘human-picked’ interp where there is a gap at the hangingwall and footwall intersections. Right: The ‘autotracked’ interp with no gaps at the faults

The solution

One of the new features for PRO 2020.1 is the ability to remove input data points within a user-defined buffer around faults. This solves both of the original problems:

- Firstly, it creates a physical separation in the hangingwall and footwall horizon-fault intersections, generating more realistic fault polygons.

- Secondly, the surface generated near to and within the fault polygon is more realistic as the erroneous data points are now excluded.
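The idea behind the buffer can be sketched in a few lines of Python. This is only an illustration of the concept, not how Petrosys PRO implements it: it assumes the buffer is measured in map view from each horizon pick to the nearest fault trace, and the function names (`distance_to_segment`, `clean_near_faults`) and the toy coordinates are hypothetical.

```python
import math

def distance_to_segment(p, a, b):
    """Shortest map-view distance from point p to the segment a-b.
    All arguments are (x, y) tuples in metres."""
    px, py = p
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        # Degenerate segment: a and b are the same point.
        return math.hypot(px - ax, py - ay)
    # Project p onto the segment and clamp to its end points.
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def clean_near_faults(picks, fault_traces, buffer_m):
    """Keep only horizon picks (x, y, z) that lie farther than
    buffer_m from every fault trace (a list of (x, y) vertices)."""
    kept = []
    for x, y, z in picks:
        near_fault = any(
            distance_to_segment((x, y), a, b) <= buffer_m
            for trace in fault_traces
            for a, b in zip(trace, trace[1:])
        )
        if not near_fault:
            kept.append((x, y, z))
    return kept

# Toy data (made up): a north-south fault at x = 500 m and one line of
# autotracked picks that runs straight through it.
fault = [(500.0, 0.0), (500.0, 1000.0)]
picks = [(float(x), 500.0, 2000.0 + 0.1 * x) for x in range(400, 601, 25)]

cleaned = clean_near_faults(picks, [fault], buffer_m=50.0)
print(len(picks), "picks in,", len(cleaned), "picks kept")
```

With a 50 m buffer, the picks that ‘bend’ through the fault zone are dropped, leaving a gap on either side of the fault for the gridding and fault polygon tools to work with.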

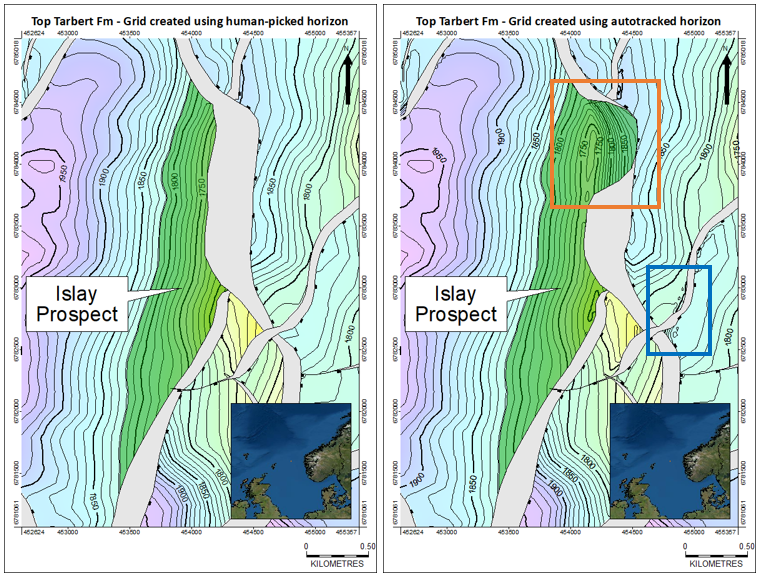

Of course, in reality, geology never has a ‘one-size-fits-all’ solution, but by varying the user-defined buffer and making use of powerful workflow functionality, it’s fairly straightforward to generate some iterations and decide on the best way forward without any manual intervention. This is shown in Figure 3 below, where the image on the right no longer suffers from the collapsed fault polygon or the artefacts that affected the original ‘autotracked’ surface.

Figure 3 – Cleaning the input data around the faults. Left: The ‘autotracked’ interp. Right: The ‘autotracked’ interp but this time using a 50m fault cleaning buffer

The difference

But does this functionality actually make a difference? Subjectively, I would argue that the map on the right of Figure 3 gives a much clearer and more accurate representation of the prospect than the map on the left (i.e. the fault cleaning has done a good job).

There is also a difference in the volumes of the prospect in each map, as the closure behaves differently in each. It’s difficult to say from the volumes alone which number is the best one to use. The point, however, is that some sound technical thinking about how the horizon actually interacts with the fault in the subsurface, combined with access to some powerful technology of course, will lead to a meaningful improvement in the accuracy of the prospect volumetric calculation.
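To see why the two maps give different volumes, here is a rough sketch of a gross rock volume calculation above a flat contact. It assumes a regular depth grid and simply sums the column height in every cell that sits above the contact; the function name `gross_rock_volume`, the 50 m cell size, the contact depth and the grid values are all made up for illustration, and a real volumetric calculation would do considerably more.

```python
def gross_rock_volume(depth_grid, contact_depth_m, cell_size_m):
    """Sum (contact - depth) * cell area over every grid node that is
    shallower than the contact. Depths are positive downwards, in metres.
    Nodes set to None (e.g. inside a fault polygon) are skipped."""
    cell_area = cell_size_m ** 2
    volume = 0.0
    for row in depth_grid:
        for depth in row:
            if depth is not None and depth < contact_depth_m:
                volume += (contact_depth_m - depth) * cell_area
    return volume

# Two toy 2x2 grids that differ at a single node next to the fault.
uncleaned = [[2010.0, 1990.0],
             [1980.0, 2005.0]]
cleaned = [[2010.0, 1990.0],
           [1995.0, 2005.0]]

for name, grid in (("uncleaned", uncleaned), ("cleaned", cleaned)):
    volume = gross_rock_volume(grid, contact_depth_m=2000.0, cell_size_m=50.0)
    print(name, volume, "cubic metres")
```

Even in this tiny example, changing one node near the fault changes the volume inside the closure, which is why artefacts around faults matter for prospect volumetrics.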

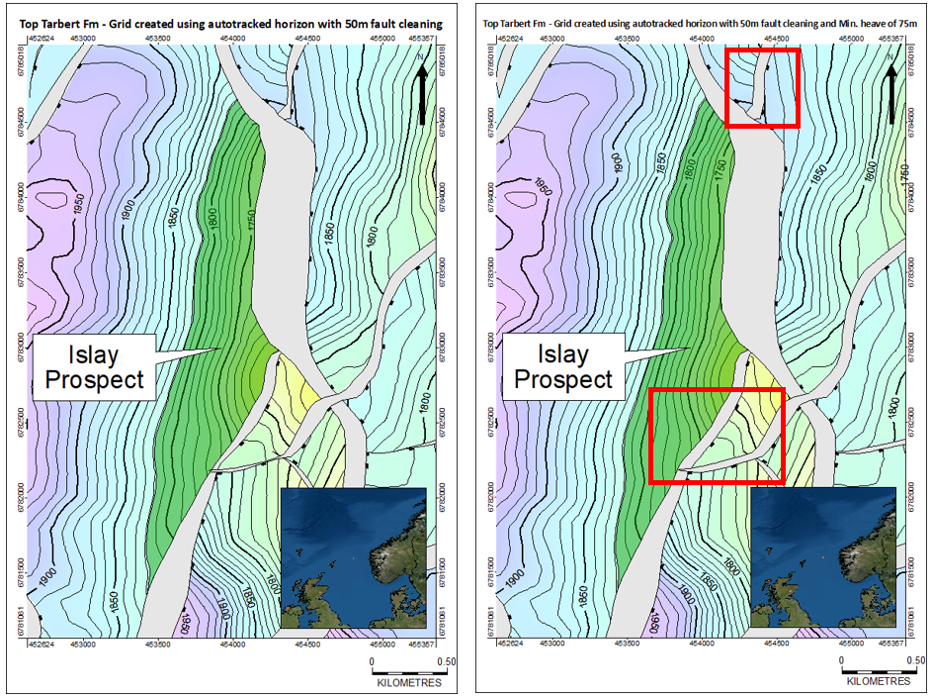

Taking it a step further

We ran this functionality over some real-world data and presented the results back to the client who had provided the data. In addition to some fine-tuning of the functionality above, it was also suggested that the raw fault polygons may not be usable for further operations. For example, the narrow fault polygons may be too thin to satisfy the requirements of an upscaled dynamic simulation model. Also, the thin heave in the raw polygon might not capture the full extent of the area of deformation. We therefore also added some control over the heave of the fault polygon being generated.

Figure 4 – The ‘minimum heave’ option. Left: no minimum heave specified. Right: A minimum heave has been chosen. Notice the fault has widened in areas where the original heave is less than the chosen distance but identical in areas which already had wider heave

As you can see in the maps above, the user has the ability to use the raw heave (from the horizon to fault interpretation) or alternatively specify minimum heaves to suit future operations.
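As a rough sketch of what a ‘minimum heave’ rule does, the snippet below widens a fault polygon only where it is narrower than the chosen distance. It assumes the polygon is described by footwall and hangingwall cut-off positions at a series of stations along the fault, and that widening is symmetric about the mid-point between the two cut-offs. Both are assumptions made for illustration; the article does not say how Petrosys PRO widens the polygon, and `apply_minimum_heave` is a hypothetical name.

```python
def apply_minimum_heave(stations, min_heave_m):
    """stations: list of (footwall_offset, hangingwall_offset) pairs, in
    metres, measured perpendicular to the fault trace at each station.
    Returns a new list in which any station with a heave below
    min_heave_m is widened symmetrically to exactly min_heave_m."""
    widened = []
    for footwall, hangingwall in stations:
        heave = hangingwall - footwall
        if heave < min_heave_m:
            centre = (footwall + hangingwall) / 2.0
            footwall = centre - min_heave_m / 2.0
            hangingwall = centre + min_heave_m / 2.0
        widened.append((footwall, hangingwall))
    return widened

# Toy stations (made up): heaves of 10 m, 80 m and 20 m.
raw = [(-5.0, 5.0), (-40.0, 40.0), (0.0, 20.0)]
print(apply_minimum_heave(raw, min_heave_m=50.0))
```

Stations that already have a wider heave are left untouched, which matches the behaviour described for Figure 4.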

Summary

In my opinion, mapping faulted horizons is going to continue to be problematic for geoscientists, even if the types of problems evolve as we get further into automated, scanning technology. Petrosys PRO has always had some tangible strengths in mapping faulted horizons, and I believe the changes we’ve made for PRO 2020.1 will allow this to continue for modern interpretation workflows. Even better, as they are part of the ‘surface modelling’ module, they can be added to a workflow so that manual interaction is kept to a minimum, which is the whole point of autotracking in the first place.

However, these are just my thoughts. What are your thoughts on mapping faulted horizons? The idea to allow widening of fault polygons only came about late in the development phase, so there may be other problems out there that we need to be solving for our industry – I’m looking forward to discussing what they are.

Get in touch

If you would like to know more about Petrosys PRO, contact our team of mapping gurus.