by Kevin Ward, Support Manager & Sales Geoscientist Petrosys

Let us suppose that you are planning to drill a new well in a new location. You’ve made a great presentation about how thick your key reservoir zone is going to be, but someone puts their hand up and says:

“How confident are you that the reservoir is that thick? Are you sure you could not have got a more accurate model by using a different gridding algorithm? What about your current interpretation picks, are you sure they’re all accurate?”

For most teams, a subjective debate would now ensue about the interpreters’ models. However, for those who have cross-validation functionality, a more objective and statistical response could be shown.

I’ll answer these specific questions in a moment, but first, here’s some background.

Background

Cross-validation is simply the process of removing known data points one at a time and comparing each result to the model created with all known data points. Or, to explain it another way:

a. Let’s say we have 100 wells numbered from 1 to 100.

b. We generate a surface (depth grid, thickness grid, porosity grid, etc.) with all 100 wells

c. Well number 1 is now excluded and we generate a surface using the same algorithms/parameters using wells 2-100 as the input data points

d. The surface from step ‘b’ is then compared statistically to the surface from step ‘c’

e. Well number 2 is now excluded and we generate a surface using the same algorithms/parameters using well 1 plus wells 3-100 as the input data points

f. The surface from step ‘e’ is then compared statistically to the surface from step ‘b’

g. The process of excluding a well and then comparing to the surface from step ‘b’ is repeated for the remaining wells.

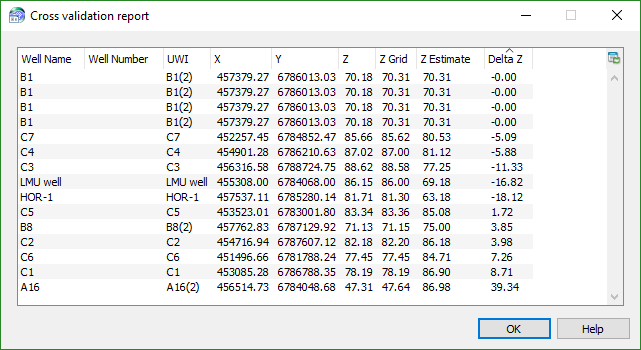

h. A report similar to below is generated to show some key statistics.

“How confident are you that the reservoir is that thick?”

To answer this question, we need to look more closely at the ‘delta z’ column. It shows the difference (in z-value units) between the surface built from all wells (step ‘b’) and the surface built with the named well excluded. To put this another way, it asks: ‘Suppose we had not drilled this well yet. How closely would the algorithms and parameters have predicted the actual z value?’
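The leave-one-well-out procedure and the delta z it reports can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses a simple inverse-distance-weighting estimator as a stand-in for a gridding algorithm (it is not the Petrosys implementation), the names `idw` and `cross_validate` and the four toy wells are hypothetical, and the sign convention (full model minus reduced model) is an assumption.

```python
import math


def idw(points, x, y, power=2.0):
    """Inverse-distance-weighted estimate of z at (x, y).

    `points` is a list of (x, y, z) tuples. This is a stand-in for a real
    gridding algorithm, chosen only because it is short and exact at wells.
    """
    num = den = 0.0
    for px, py, pz in points:
        d = math.hypot(x - px, y - py)
        if d == 0.0:
            return pz  # estimate location coincides with a data point
        w = 1.0 / d ** power
        num += w * pz
        den += w
    return num / den


def cross_validate(wells, estimator=idw):
    """Return {well name: delta z} using leave-one-well-out.

    delta z = (estimate with all wells) - (estimate with this well excluded),
    both evaluated at the excluded well's location (assumed sign convention).
    """
    points = [(x, y, z) for _, x, y, z in wells]
    report = {}
    for i, (name, x, y, _) in enumerate(wells):
        full = estimator(points, x, y)
        reduced = estimator(points[:i] + points[i + 1:], x, y)
        report[name] = full - reduced
    return report


# Hypothetical toy wells: (name, x, y, z)
wells = [
    ("W1", 0.0, 0.0, 100.0),
    ("W2", 10.0, 0.0, 110.0),
    ("W3", 0.0, 10.0, 105.0),
    ("W4", 10.0, 10.0, 150.0),
]

report = cross_validate(wells)
for name, dz in report.items():
    print(f"{name}: delta z = {dz:+.1f}")
```

A large absolute delta z for a well means its neighbours alone would have predicted its z value poorly, which is exactly what the report column is meant to expose.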

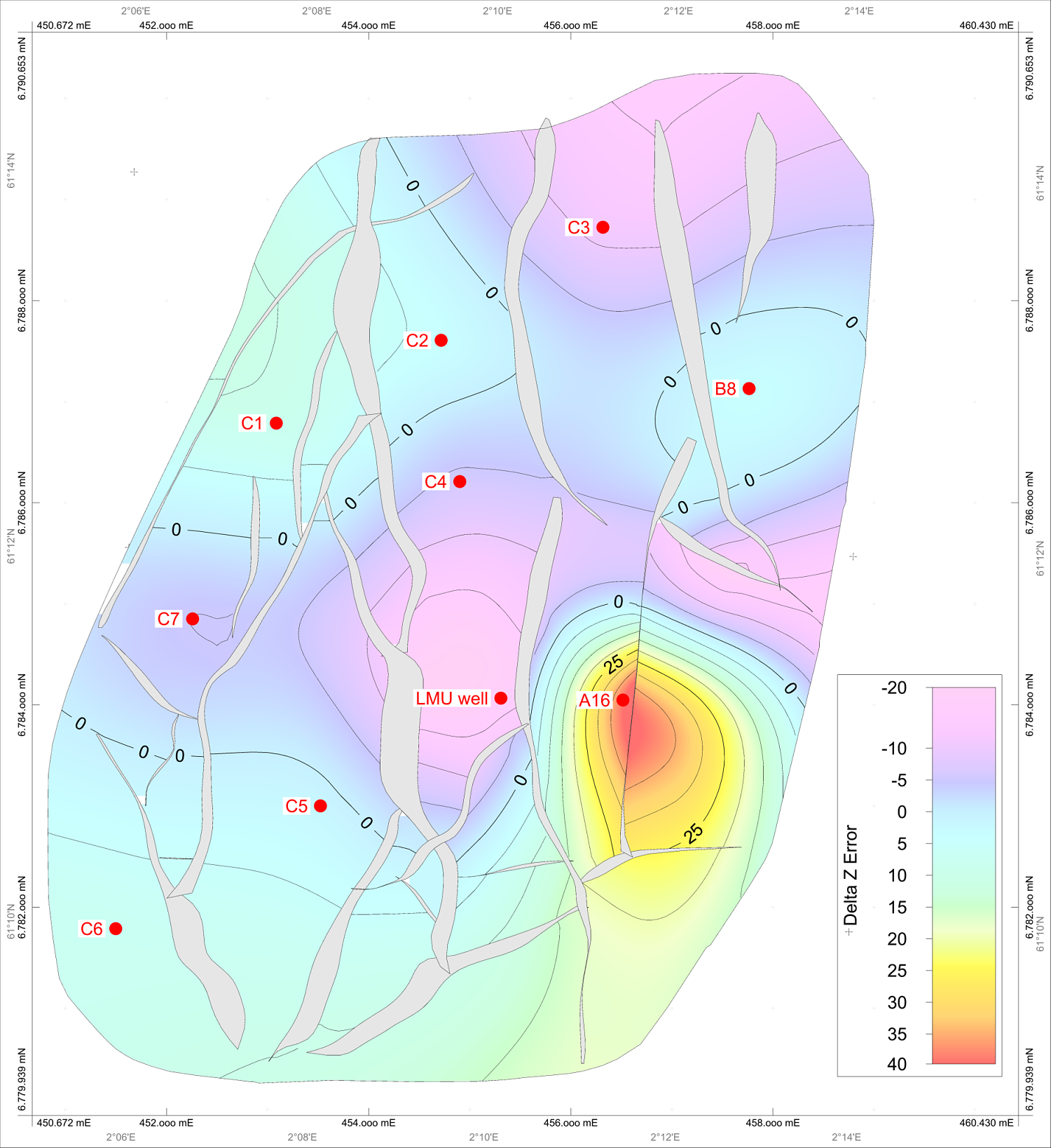

You could also map the delta z points, as shown below. This map now answers the question spatially. For example, it says the model did not do a good job of predicting the z value around the ‘A16’ well area. In fact, had we not drilled this well, our model would have been about 30-40 m out. Now that we have well A16, our future predictions may be better, but this area of the map should still cause concern. On the flip side, if we were going to drill a well near the C1/C2 wells, we could say we are confident the model is predicting the z values accurately in this area.

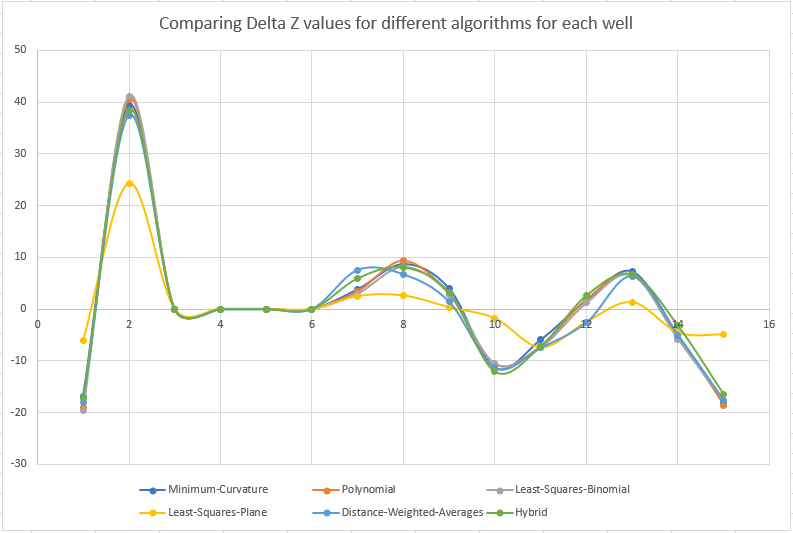

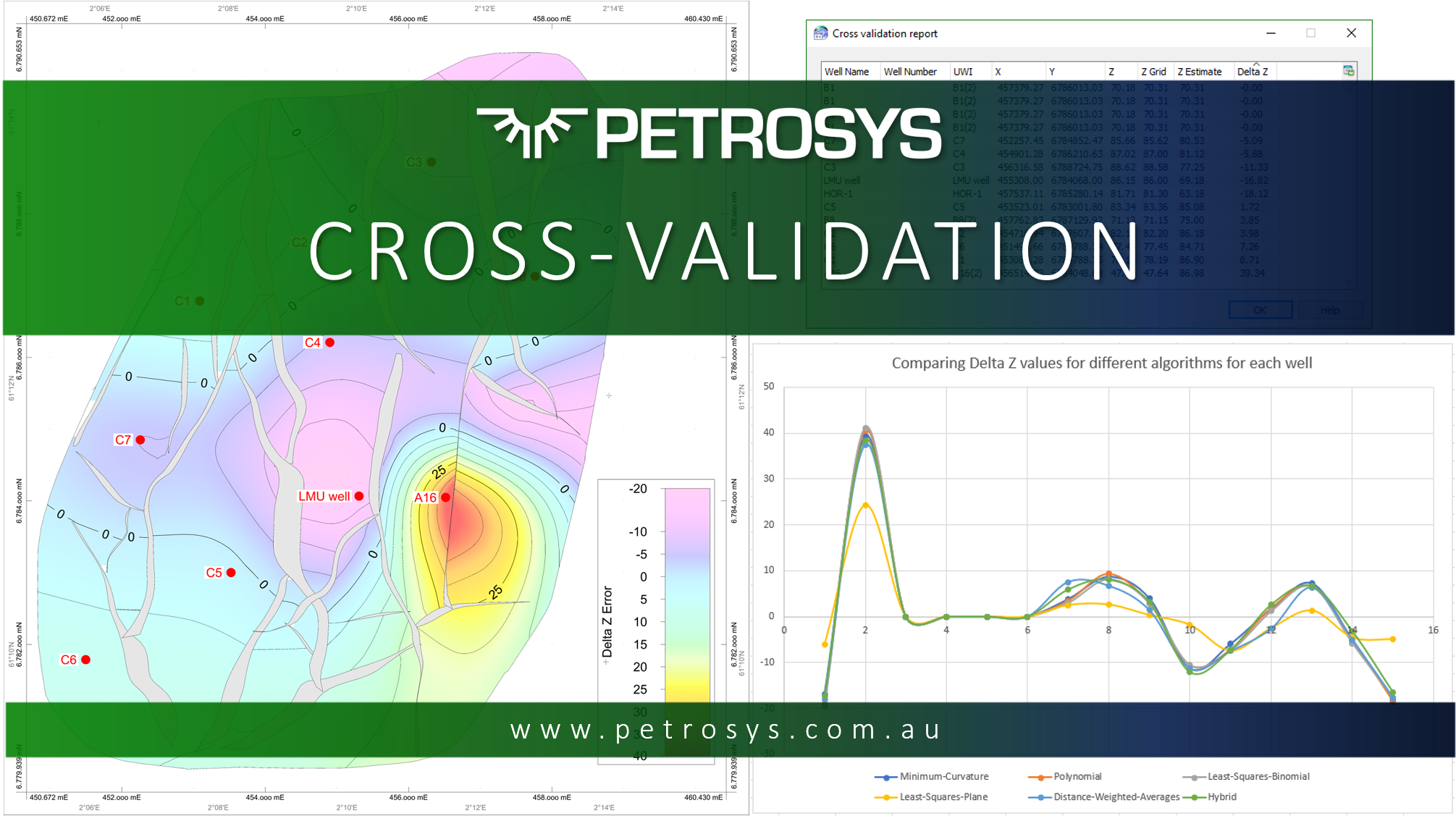

“Are you sure you could not have got a more accurate model by using a different gridding algorithm?”

Delta z will again allow us to answer this question. This time, however, we need to compare the delta z values obtained with different parameters (in this case, the algorithm). There are many ways to make this comparison, but the logic is that the algorithm with the lowest overall delta z is the one best able to predict the surface.

Here we can see that the ‘Least-Squares-Plane’ algorithm is doing the best job overall. Of course, now we need to view the ‘Least-Squares-Plane’ surface and make sure it looks correct geologically!
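One possible way to turn "lowest overall delta z" into a single number per algorithm is the root-mean-square of the delta z values. The sketch below assumes that choice (mean absolute error would be another reasonable one); the function names, the algorithm labels and the toy delta z values are all hypothetical placeholders, not results from the dataset in this article.

```python
import math


def rms(values):
    """Root-mean-square of a list of delta z values."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def rank_algorithms(delta_z_by_algorithm):
    """Return [(algorithm, rms delta z), ...] sorted from best to worst.

    `delta_z_by_algorithm` maps an algorithm label to the delta z values
    from its cross-validation report (one value per excluded well).
    """
    scores = {name: rms(dz) for name, dz in delta_z_by_algorithm.items()}
    return sorted(scores.items(), key=lambda item: item[1])


# Invented delta z values, for illustration only
toy_reports = {
    "algorithm_a": [3.0, -4.0, 0.0, 5.0],
    "algorithm_b": [1.0, -1.0, 2.0, -2.0],
}

ranking = rank_algorithms(toy_reports)
for name, score in ranking:
    print(f"{name}: RMS delta z = {score:.2f}")
```

Squaring before averaging stops positive and negative errors from cancelling out, and it penalises a few large misses more heavily than many small ones. The ranking is only a statistical guide, so the winning surface still needs a geological sanity check.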

“What about your current interpretation picks, are you sure they’re all accurate?”

This was actually the initial reason we developed the cross-validation tool here at Petrosys. A client had thousands of wells which had been interpreted by many different people over many years (many of whom no longer worked for the company). So how do you determine which wells have a good interpretation and which have an erroneous one? Again, the cross-validation report gives you some guidance. The dataset I have here is too small to be used for this purpose (you may as well just manually check the interpretation of all 15 wells), but imagine you have 1000 wells. The cross-validation report may show a low delta z for 980 of those points but high values for 20 of them. You now have only 20 wells which warrant further investigation!
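Picking out the handful of wells with unusually high delta z can be automated. The sketch below is one possible approach, not the rule used by the Petrosys tool: it flags wells whose delta z lies more than `k` median absolute deviations from the median. The function name, the threshold `k=3.0` and the toy values are assumptions made for illustration.

```python
import statistics


def flag_suspect_wells(delta_z, k=3.0):
    """Return names of wells whose delta z is an outlier.

    `delta_z` maps well name to delta z. A well is flagged when its value
    is more than k median absolute deviations (MAD) from the median.
    """
    values = list(delta_z.values())
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0.0:
        return []  # no spread to measure against; nothing is flagged
    return [name for name, v in delta_z.items() if abs(v - med) > k * mad]


# Invented delta z values, for illustration only
toy_delta_z = {
    "W1": 0.5,
    "W2": -1.0,
    "W3": 1.5,
    "W4": -0.5,
    "W5": 35.0,
    "W6": 0.0,
}

suspects = flag_suspect_wells(toy_delta_z)
print("Wells to review:", suspects)
```

The median and MAD are used rather than the mean and standard deviation because a few badly picked wells would otherwise inflate the very threshold meant to catch them. A flagged well is a prompt to revisit the pick, not proof that it is wrong, since a real geological feature can produce a high delta z too.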

What else can this be used for?

This is where I am reaching out to you (if you’re still reading 😉). Where do we take this tool next? We’ve already extended it into Well Time Vs. Depth trend analysis, so are there other stages of the depth conversion process that could benefit from a better understanding of error? Maybe the well tie stages? Or are there other areas of E&P work where we’ve missed a good opportunity to improve success? As I mentioned, the tool has been developed and tuned to fit a workflow involving well data. Perhaps it would also be useful in gridding with 2D or 3D seismic?

Interested to hear your views.